Zana Buçinca, Maja Barbara Malaya, and Krzysztof Z. Gajos. To Trust or to Think: Cognitive Forcing Functions Can Reduce Overreliance on AI in AI-assisted Decision-making. Proceedings of the ACM on Human-Computer Interaction, 5(CSCW1), 2021.

Maia Jacobs, Jeffrey He, Melanie F. Pradier, Barbara Lam, Andrew C. Ahn, Thomas H. McCoy, Roy H. Perlis, Finale Doshi-Velez, and Krzysztof Z. Gajos. Designing AI for Trust and Collaboration in Time-Constrained Medical Decisions: A Sociotechnical Lens. In Proceedings of the 2021 CHI Conference on Human Factors in Computing Systems, New York, NY, USA, 2021. Association for Computing Machinery.

Maia Jacobs, Melanie F. Pradier, Thomas H. McCoy Jr, Roy H. Perlis, Finale Doshi-Velez, and Krzysztof Z. Gajos. How machine-learning recommendations influence clinician treatment selections: the example of antidepressant selection. Translational Psychiatry, 11, 2021.

Zana Buçinca, Phoebe Lin, Krzysztof Z. Gajos, and Elena L. Glassman. Proxy Tasks and Subjective Measures Can Be Misleading in Evaluating Explainable AI Systems. In Proceedings of the 25th International Conference on Intelligent User Interfaces. ACM, 2020. To appear.

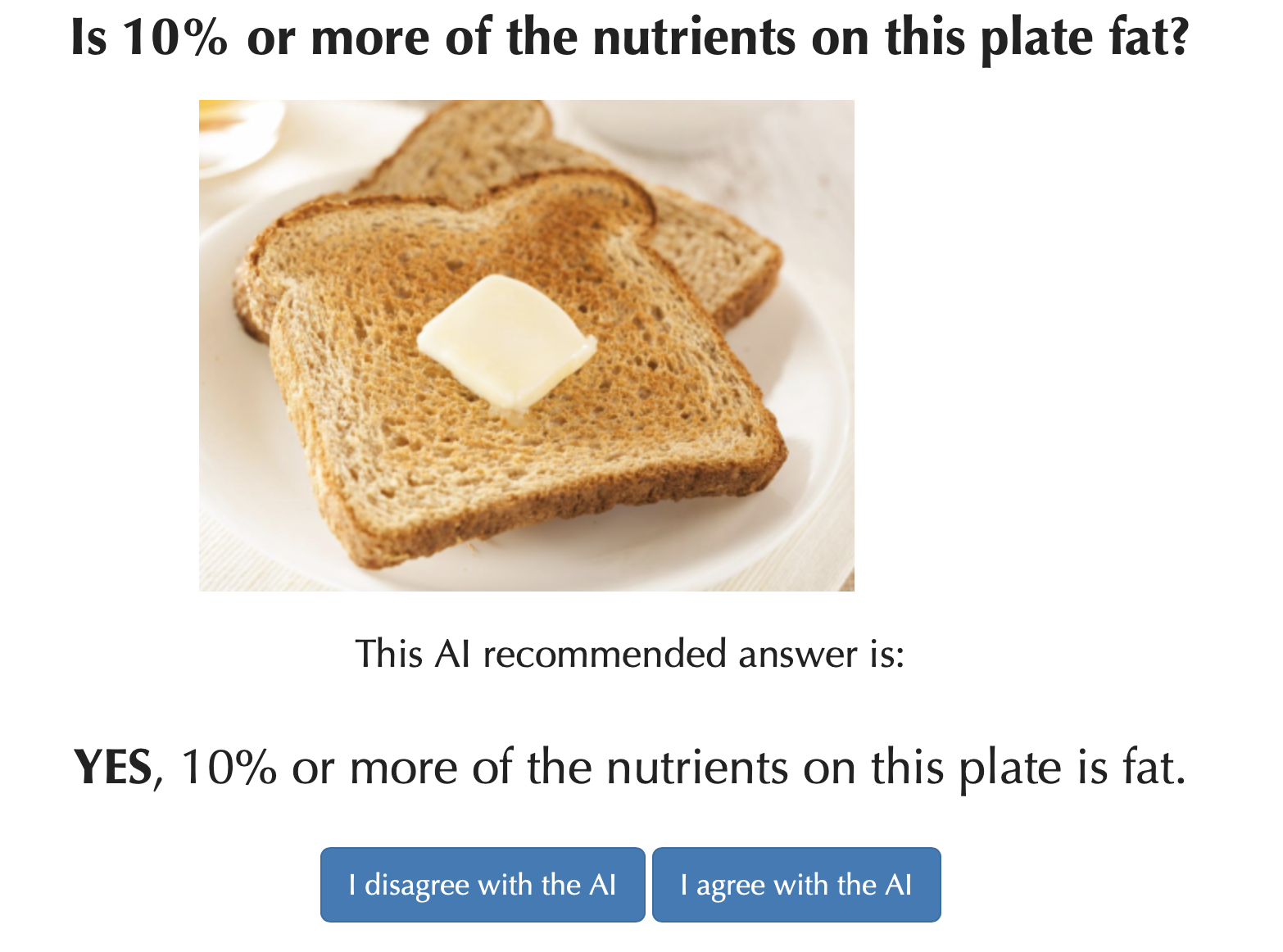

AI-powered decision support tools form part of sociotechnical systems, that is, human+AI teams tasked with making decisions. Because people and AI-powered systems have complementary strengths, many expected that human+AI teams would perform better on decision-making tasks than either people or AIs alone. However, there is mounting evidence that human+AI teams often perform worse than AIs alone. Building on insights from both machine learning and cognitive science, we are developing new general principles and specific solutions to overcome people's overreliance on AI and to help human+AI teams make higher-quality and more confident decisions than existing systems enable.